In short, Dolby Vision offers higher potential image quality than HDR10 thanks to 12-bit color support and dynamic metadata, compared with HDR10’s 10-bit color and static metadata. HDR10+ adds dynamic metadata to HDR10, which makes it very similar to Dolby Vision, as explored further below.

Both HDR10 and Dolby Vision are very commonly seen on TVs and monitors, but what do they really mean?

In this article, we’ll cover every difference between these HDR standards and help you to figure out precisely which one is right for you.

Dolby Vision vs HDR10

HDR10 is an open standard announced by the Consumer Electronics Association in 2015. It specifies a wide color gamut and 10-bit color, and is the most widely used of the HDR formats.

It’s a pretty basic format that doesn’t really tell you much, only that the screen is able to display high dynamic range images, with plenty of detail in darks and lights, and with good contrast. These monitors are very good for photo editing.

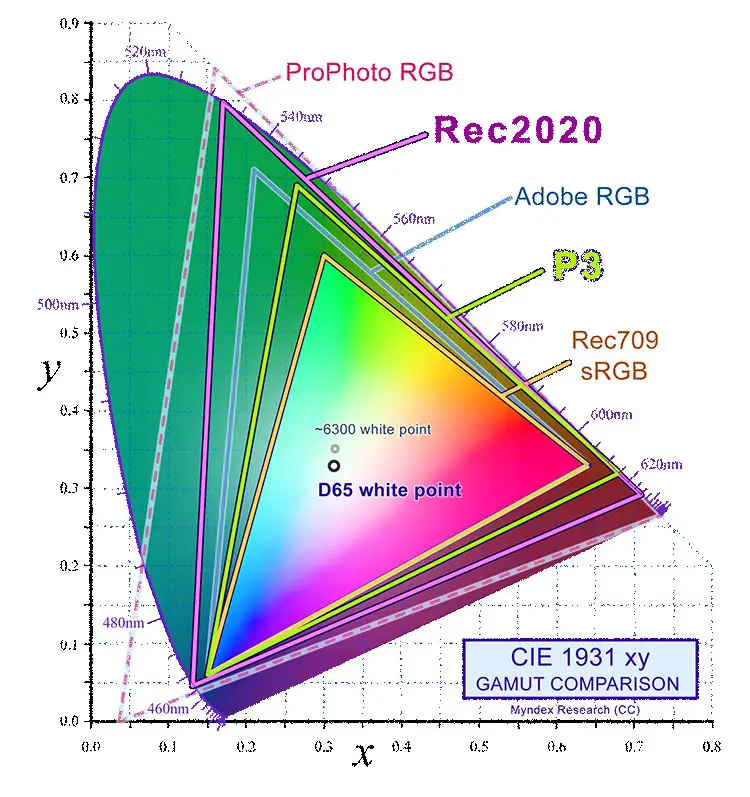

The color gamut of an HDR10 screen should follow the Rec. 2020 specification, but just because a monitor follows this gamut doesn’t mean that it is able to display all of its colors.

(Source: Wikimedia Commons)

HDR10 directly competes with two other formats: HDR10+, which adds dynamic metadata so that the screen can determine the correct dynamic range on a frame-by-frame basis, and Dolby Vision, which is similar to HDR10+ but is a proprietary format.

Dynamic metadata is the key difference between HDR10 and Dolby Vision.

Standard HDR10 has static metadata. This means that the brightness information is set once for the whole movie and stays the same through to the end, even if one scene is set in bright daylight and the next in a dark cave.

The brightness levels are set by the film studio during mastering, and typically mean that dark scenes don’t show true blacks and instead seem too light, because they can only use a very limited amount of the brightness range of your TV.

Dolby Vision gets around this by allowing the brightness to vary frame by frame.

Metadata embedded in the movie by the film studio allows the brightness range of the picture to be changed dynamically, moving the available gradations of brightness to better accommodate what is on screen.

This means that scenes in bright daylight and dark caves can have the brightness range tailored to those scenes, allowing for subtle brightness gradations and more detail in each scene.

HDR10+ is very similar to Dolby Vision in this respect.

HDR10 vs Dolby Vision Comparison

It’s easier to see the key differences between HDR10 and Dolby Vision laid out in a table.

| | HDR10 | HDR10+ | Dolby Vision |
|---|---|---|---|
| Bit Depth | 10-bit | 10-bit | 12-bit |
| Peak Brightness | 10,000 cd/m² | 10,000 cd/m² | 10,000 cd/m² |
| Metadata | Static | Dynamic | Dynamic |
| Backwards Compatibility | None | With HDR10 | With HDR10 |
| Gaming Support | Consoles & PC | Only PC | Xbox and PC |
| Streaming Support | All platforms | Prime Video, Paramount+ & Apple TV+ | All large platforms |

Let’s delve a bit deeper into these differences so that you can fully understand what they mean.

Bit Depth

Both HDR10 and HDR10+ are 10-bit standards (although HDR10+ can technically be pushed higher). Dolby Vision offers 12-bit color support right out of the box.

This difference is significant on paper, with 10-bit displays capable of 1.07 billion colors, while 12-bit displays could show a maximum of 68.7 billion colors.

But there are no current 12-bit displays on the market, and even if there were, there’s no real content available in 12-bit.

This means that you can largely ignore the bit depth differences for now, although this might be something worth revisiting in 5 or 10 years’ time.
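The color counts quoted in this section follow from simple arithmetic: each of the three color channels (red, green, blue) gets 2 to the power of the bit depth levels, and the total is that number cubed. A minimal sketch, where the function name `color_count` is just an illustrative choice:

```python
def color_count(bits_per_channel: int) -> int:
    """Number of distinct RGB colors for a given bit depth per channel."""
    levels = 2 ** bits_per_channel   # brightness steps per channel
    return levels ** 3               # three channels: red, green, blue

for bits in (10, 12):
    print(f"{bits}-bit: {2 ** bits:,} levels per channel, "
          f"{color_count(bits):,} colors")
```

This gives 1,073,741,824 colors for 10-bit and 68,719,476,736 for 12-bit, which round to the 1.07 billion and 68.7 billion figures above.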

Peak Brightness

All three standards have a maximum peak brightness of 10,000 nits (1 nit is 1 cd/m²), although content is generally mastered for the 1,000 to 4,000 nit range.

Again, current displays cannot sustain a 10,000 nit brightness, meaning that this is more for future-proofing than for current use.
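As an aside on where the 10,000 nit ceiling comes from: all three formats encode brightness with the PQ transfer function (SMPTE ST 2084), whose top signal value is defined as 10,000 nits. The sketch below decodes a normalized signal value into nits. The constants are the ones published for PQ; the function name `pq_to_nits` and the use of a 0 to 1 signal (rather than real 10-bit video code values) are simplifying assumptions.

```python
# PQ (SMPTE ST 2084) constants
M1 = 2610 / 16384          # 0.1593017578125
M2 = 2523 / 4096 * 128     # 78.84375
C1 = 3424 / 4096           # 0.8359375
C2 = 2413 / 4096 * 32      # 18.8515625
C3 = 2392 / 4096 * 32      # 18.6875

def pq_to_nits(signal: float) -> float:
    """Decode a normalized PQ signal (0.0 to 1.0) into luminance in nits."""
    p = signal ** (1 / M2)
    ratio = max(p - C1, 0.0) / (C2 - C3 * p)
    return 10000.0 * ratio ** (1 / M1)

for s in (0.0, 0.5, 0.75, 1.0):
    print(f"signal {s:.2f} -> {pq_to_nits(s):.1f} nits")
```

A full-scale signal decodes to exactly 10,000 nits, while a half-scale signal is only roughly 92 nits, which shows how much of the signal range is spent on darker tones.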

Static or Dynamic Metadata?

The main difference between HDR10 and Dolby Vision is the static vs dynamic metadata.

Dynamic metadata allows the display to have its tone mapping and brightness changed on a frame by frame basis, giving better detail in darker scenes than with static metadata.
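To make the difference concrete, here is a toy model of tone mapping on a TV that cannot reach the brightness the movie was mastered for. It is not the actual algorithm used by Dolby Vision, HDR10+ or any TV; the linear scaling, the function name `tone_map` and all the brightness numbers are assumptions chosen purely for illustration.

```python
def tone_map(pixels_nits, metadata_peak_nits, display_peak_nits):
    """Toy linear tone map: compress so the peak described by the metadata
    fits on the display. Content that already fits is left untouched."""
    scale = min(1.0, display_peak_nits / metadata_peak_nits)
    return [nits * scale for nits in pixels_nits]

DISPLAY_PEAK = 600.0                # hypothetical TV peak brightness in nits
daylight = [50.0, 800.0, 4000.0]    # made-up pixel brightness, bright scene
cave = [0.5, 5.0, 40.0]             # made-up pixel brightness, dark scene

# Static metadata: one peak value describes the whole movie.
title_peak = max(daylight + cave)
static_cave = tone_map(cave, title_peak, DISPLAY_PEAK)

# Dynamic metadata: each scene carries its own peak value.
dynamic_cave = tone_map(cave, max(cave), DISPLAY_PEAK)

print("cave with static metadata :", static_cave)
print("cave with dynamic metadata:", dynamic_cave)
```

In this toy model the static version squeezes the cave scene into about 6 nits of range, because it is scaled by the same factor as the 4,000 nit daylight scene. The dynamic version leaves the cave scene at its full 0.5 to 40 nit range, since that scene already fits on the display.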

Backwards Compatibility

Both HDR10+ and Dolby Vision are backwards compatible with standard HDR content that only has static metadata.

HDR10 is not backwards compatible, but that is largely because there are no HDR formats below it.

HDR10+ can add dynamic metadata to an HDR10 source, up-rating it to HDR10+. How well this works depends on the TV manufacturer.

Dolby Vision builds its own dynamic metadata from a static metadata source, giving it potentially better backwards compatibility than HDR10+.

Gaming Support

HDR10+ Gaming now gives HDR10+ support on PC, but you won’t find this on any consoles. Using a console with an HDR10+ TV will give a standard HDR10 image.

Dolby Vision has better console support, with the various recent Xbox models supported, along with PCs.

HDR10 is widely supported among both consoles and PCs thanks to its older, simpler standard.

Streaming Support

For streaming movies, you are better off with Dolby Vision, as many more platforms support it, including Netflix, Disney+ and Prime Video.

Of the larger platforms, Prime Video is the main supporter of HDR10+, which is not surprising as Amazon co-developed the standard. Paramount+ and Apple TV+ also offer it.

HDR10 is widely supported by all streaming platforms.

Note that not all TVs support both HDR10+ and Dolby Vision – most are either one or the other.

TCL, Hisense and Vizio are the main brands with support for both HDR10+ and Dolby Vision standards, while Samsung, a co-developer of HDR10+, only has HDR10+ support and LG and Sony support Dolby Vision only.
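In practice, what you see depends on which formats a title is offered in and which formats your TV supports, with HDR10 as the common fallback. A rough sketch of that selection logic is shown below. The preference order, the `pick_format` name and the example format sets are assumptions for illustration, not how any particular streaming app or TV actually negotiates formats.

```python
# Assumed preference order: dynamic-metadata formats first, HDR10 as fallback.
PREFERENCE = ["Dolby Vision", "HDR10+", "HDR10"]

def pick_format(source_formats, tv_formats):
    """Return the first preferred format that both the source and TV support."""
    for fmt in PREFERENCE:
        if fmt in source_formats and fmt in tv_formats:
            return fmt
    return "SDR"  # no shared HDR format

# Hypothetical examples based on the brand support described above.
dolby_title = {"Dolby Vision", "HDR10"}
samsung_tv = {"HDR10+", "HDR10"}
lg_tv = {"Dolby Vision", "HDR10"}
tcl_tv = {"Dolby Vision", "HDR10+", "HDR10"}

print(pick_format(dolby_title, samsung_tv))  # HDR10
print(pick_format(dolby_title, lg_tv))       # Dolby Vision
print(pick_format(dolby_title, tcl_tv))      # Dolby Vision
```

The first case is the one to watch for: a Dolby Vision title on an HDR10+ only TV falls back to plain HDR10.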

What is Better: HDR10 or Dolby Vision?

It’s clear that Dolby Vision offers much better native performance than HDR10, with 12-bit color support and dynamic metadata, although the difference becomes much smaller when you also include HDR10+.

Overall, neither is absolutely better than the other – it really comes down to whether you are in the Samsung ecosystem or LG and Sony.

Which Has More Content: Dolby Vision or HDR10+?

If you watch most content via a streaming service, then Dolby Vision offers much more content, although HDR10+ TVs should be able to upscale the standard HDR10 streams of most providers.

If you use Blu-ray players, then either format will serve you well, as most studios release movies with HDR streams in both formats.

Much more important is the TV that you get, with TCL, Hisense and Vizio making TVs that support both HDR10+ and Dolby Vision.

Dolby Vision vs HDR10 on Netflix

Dolby Vision offers much better image quality on Netflix than HDR10, thanks to dynamic metadata improving the contrast by tone mapping each individual frame.

Because Netflix doesn’t offer an HDR10+ stream, you cannot get native HDR10+ images and must rely on your TV to add dynamic metadata to the static HDR10 stream.

Can You Convert HDR10+ to Dolby Vision?

It’s not practical to convert HDR10+ to Dolby Vision right now, but it is likely technically possible if you are willing to put in some legwork. A good resource for this is Dolby’s Hybrik site.

Is Dolby Vision the Future?

Because Dolby Vision has 12-bit support, along with dynamic metadata, it can be thought of as being future-proofed. Competing standards are still some way behind Dolby Vision, although the proprietary format could mean that it is not widely adopted unless Dolby opens it up to manufacturers on a much wider basis.
